Just now, a new breakthrough in the field of AI in healthcare was announced by Google (GOOGL.US)!

This time, they directly targeted the pain points of real-world clinical environments.

For a long time, medical models have been like students with unbalanced expertise: they excel at “reading medical records” but struggle with medical images such as CT scans, MRIs, and pathology slides.

This is because they are forced to use text-based logic to interpret images, leading to low efficiency, frequent errors, and high costs.

To solve this problem, Google has introduced its latest model, MedGemma 1.5.

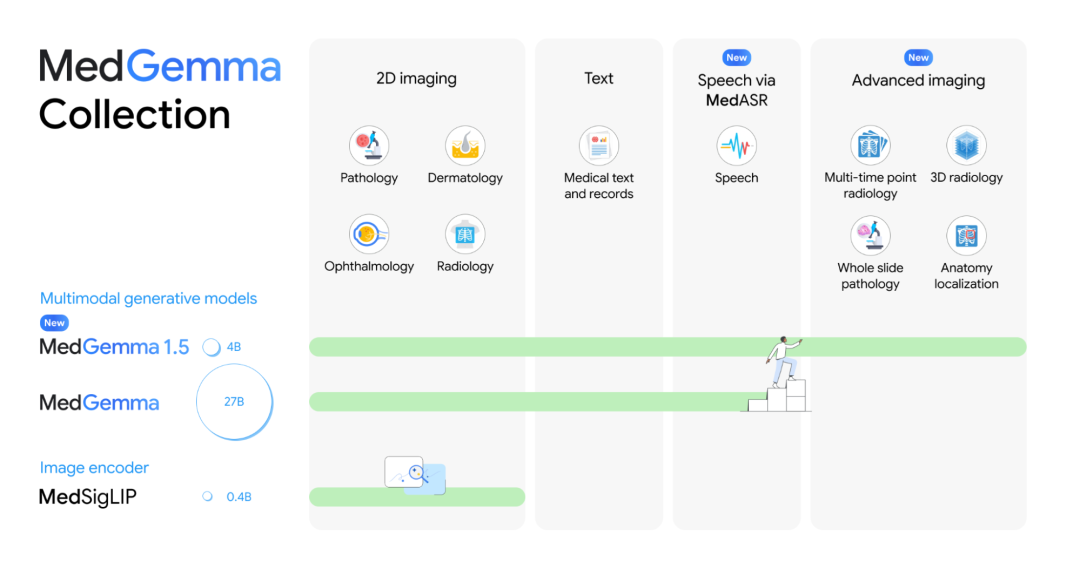

Compared with its predecessor, MedGemma 1.5 has made significant advances in multimodal applications, adding support for:

High-dimensional medical imaging: computed tomography (CT), magnetic resonance imaging (MRI), and histopathology.

Longitudinal medical imaging: analysis of chest X-ray time series.

Anatomical localization: identification of anatomical features on chest radiographs.

Medical document understanding: extraction of structured data from medical laboratory reports.

Google said MedGemma 1.5 is the first publicly released open multimodal large language model that can interpret high-dimensional medical data while retaining the ability to analyze general two-dimensional images and text.

More importantly, MedGemma 1.5 has only 4 billion parameters, which means it can run on ordinary consumer graphics cards as well as on high-performance workstations.

Not only that, but Google also released MedASR, a speech recognition model specifically tailored for medical voice applications, capable of converting conversations between doctors and patients into text and integrating seamlessly with MedGemma.

Simply put, MedGemma 1.5 addresses “how to interpret images”, while MedASR addresses “how to process audio”.

This is not just a simple model iteration, but rather a systematic answer from Google to the question of “how to effectively bring AI into the clinic.”

An AI doctor capable of carefully reading medical records, understanding imaging, and clearly recognizing speech will soon enter every hospital.

AI healthcare is entering the multimodal era.

Over the past year, we have witnessed impressive performance from models like GPT-5 in medical exams, but their performance often falls short in real-world clinical scenarios.

An important reason lies in the fragmentation of information dimensions.

Many medical models, including the first-generation MedGemma, are essentially “text experts” with limited ability to interpret images, which leads to a loss of diagnostic information.

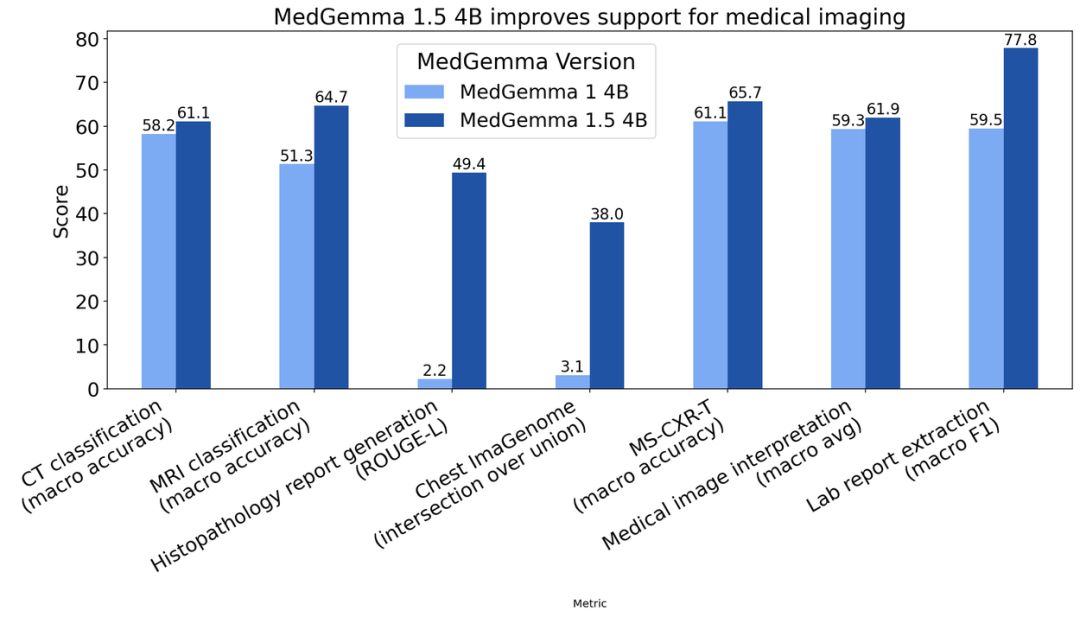

MedGemma 1.5 delivers comprehensive, multidimensional performance improvements in medical imaging applications, significantly surpassing its predecessor.

For high-dimensional medical imaging, MedGemma 1.5 achieved the following results:

CT disease classification accuracy increased from 58% to 61%.

The accuracy of MRI disease classification has improved from 51% to 65%, with particularly significant progress in identifying complex anatomical structures such as the brain and joints.

The ROUGE-L score for whole-slide pathological descriptions improved from an almost negligible 0.02 to 0.49, reaching the level of the specialized PolyPath model (0.498) and enabling the generation of clinically usable histological descriptions.

Figure: Improved performance of MedGemma 1.5 in medical imaging
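For readers unfamiliar with the ROUGE-L score cited above for pathology descriptions, the metric measures how much of a reference text is reproduced, in order, by a generated text, using the longest common subsequence (LCS) of words. The following is a minimal Python sketch of the plain F1 form of ROUGE-L. The article does not say which ROUGE-L variant or tokenization Google used, so whitespace tokenization, lowercasing, and the function names here are assumptions for illustration only, and the example sentences are made up.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference, candidate):
    """ROUGE-L as the F1 of LCS-based precision and recall (assumed variant)."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    if not ref or not cand:
        return 0.0
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)  # share of generated words that match in order
    recall = lcs / len(ref)      # share of reference words that are recovered
    return 2 * precision * recall / (precision + recall)


# Made-up example sentences, not taken from any benchmark.
reference = "tumor cells invade the surrounding stroma"
candidate = "tumor cells invade nearby stroma"
print(round(rouge_l_f1(reference, candidate), 3))
```

A score near 0 means the generated description shares almost no ordered wording with the reference, while a score near 1 means it reproduces the reference almost word for word.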

For longitudinal time series image analysis, MedGemma 1.5 achieved the following results:

On the MS-CXR-T time series evaluation benchmark, macro accuracy improved from 61% to 66%.

It effectively captures how lesions change over time, for example whether a pneumonia infiltrate is resolving, which supports follow-up decision-making.

For general interpretation of 2D medical images, MedGemma 1.5 provided the following results:

On the full internal single-image benchmark (covering radiographs, skin, fundus, and pathology slides), overall classification accuracy increased from 59% to 62%.

This demonstrates that the model retains its broad 2D capabilities without compromising fundamental performance due to the addition of higher-dimensional tasks.

For structured medical documents, MedGemma 1.5 achieved the following results:

The macro-average F1 score for extracting test items, values, and units from PDFs or unstructured text increased from 60% to 78% (+18 percentage points).

Automatically building structured databases in this way closes the last gap in the combined analysis of imaging, text, and laboratory test data.

Figure: MedGemma 1.5 performance improvement on text tasks
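The improvements quoted above are differences between two percentages, so it helps to keep absolute gains (percentage points) separate from relative gains. The short Python sketch below does this for the before-and-after figures reported in this article. The function names and task labels are illustrative and are not part of any Google tool.

```python
def absolute_gain(old, new):
    """Gain in percentage points; both inputs are percentages."""
    return new - old


def relative_gain(old, new):
    """Gain as a percentage of the old score."""
    return (new - old) * 100 / old


# (previous version, MedGemma 1.5), in percent, as reported in the article.
results = {
    "CT classification accuracy": (58, 61),
    "MRI classification accuracy": (51, 65),
    "MS-CXR-T macro accuracy": (61, 66),
    "2D single-image accuracy": (59, 62),
    "Lab-report extraction macro F1": (60, 78),
}

for task, (old, new) in results.items():
    points = absolute_gain(old, new)
    relative = relative_gain(old, new)
    print(f"{task}: +{points} points ({relative:.1f}% relative)")
```

For the lab-report extraction score, for instance, the move from 60% to 78% is 18 percentage points in absolute terms, or a 30% improvement relative to the previous version.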

Meanwhile, traditional automatic speech recognition (ASR) models perform like complete novices without medical training when confronted with obscure medical terminology, with extremely high word error rates turning AI transcription into a burden for doctors.

In contrast, the new MedASR automatic speech recognition model, optimized for medical applications, significantly reduces error rates.

The researchers compared the performance of MedASR with the general-purpose ASR model Whisper large-v3.

They found that MedASR made 58% fewer errors than Whisper large-v3 on chest X-ray dictation and 82% fewer errors on dictation across different medical specialties.
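Comparisons like this are usually expressed in terms of word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn a transcript into the reference, divided by the number of reference words. The Python sketch below shows how WER and a relative error reduction can be computed. The sentences are made up, the function names are hypothetical, and this is not Google's evaluation code.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)


def relative_error_reduction(baseline_wer, new_wer):
    """Fraction of the baseline's errors that the new system removes."""
    return (baseline_wer - new_wer) / baseline_wer


# Made-up dictation example: two hypothetical transcripts of one sentence.
reference = "no focal consolidation or pleural effusion"
general_asr = "no vocal consolidation or plural effusion"   # 2 wrong words
medical_asr = "no focal consolidation or plural effusion"   # 1 wrong word

baseline = word_error_rate(reference, general_asr)
improved = word_error_rate(reference, medical_asr)
print(f"baseline WER {baseline:.2f}, improved WER {improved:.2f}")
print(f"relative reduction {relative_error_reduction(baseline, improved):.0%}")
```

In this toy case the second transcript halves the number of errors, which is the same kind of relative figure as the 58% and 82% reductions reported for MedASR.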

Google is betting billions of dollars on AI-powered healthcare

Google has established a strong presence in the healthcare sector, with its technological reach extending to every corner of the industry.

In terms of investments, Google has funded many life sciences companies through its venture capital division and private equity division.

Among these, AI-based drug discovery has become a key focus for Google. Of the 51 health-related investments made by Google Ventures in 2021, 28 were in drug research and development, or more than half.

On the collaboration front, leveraging its core artificial intelligence and cloud computing services, Google has struck deals with pharmaceutical giants such as Bayer, Pfizer, Servier and institutions like the Mayo Clinic to explore intelligent solutions ranging from drug discovery to clinical treatment.

Internally, in addition to Google Health, Google operates several specialized business units, including Verily and Calico, forming a diverse and robust matrix focused on different areas.

Notably, as a leading artificial intelligence research institution, Google DeepMind has introduced several groundbreaking scientific models, including AlphaFold (protein structure), AlphaGenome (DNA regulation), and C2S-Scale (single cell).

Demis Hassabis, CEO of Google DeepMind, shared the 2024 Nobel Prize in Chemistry for his contributions to AI-based protein structure prediction.

In recent years, amid the trend toward large language models, Google has developed several large vertical models suitable for healthcare applications.

These models not only help doctors diagnose diseases more accurately, but also provide patients with personalized health recommendations.

The Google team first developed Flan-PaLM, a model that scored 67.6% on United States Medical Licensing Examination (USMLE)-style questions, more than 17 percentage points above the previous best model.

Subsequently, Google released Med-PaLM, which was published in Nature. In evaluations by clinicians, Med-PaLM’s accuracy in answering practical medical questions came close to that of human physicians.

In 2023, Google launched Med-PaLM M, described as the world’s first large generalist medical model. It approaches or outperforms the existing state of the art (SOTA) on 14 tasks, including question answering, report generation and summarization, visual question answering, medical image classification, and genomic variant calling.

Last year, Dr. Karen DeSalvo, Google’s chief health officer, announced six breakthroughs, including the TxGemma model for AI-based drug development, the FDA-cleared Loss of Pulse Detection feature for smartwatches, the “AI co-scientist” multi-agent system, and a personalized pediatric cancer treatment model.

From medical imaging to drug development, health assistants to wearable devices, Google is redefining the future of healthcare.

This article is reprinted from the official WeChat account “Zhiyaoju”; edited by Yan Wencai of Zhitong Finance.