Digital doctor on a free flat design background. Online medical Q&A concept.

LangPhoto/iStockphoto/Getty Images


Start reading recent Internet conversations about AI and you’ll discover a story that comes up with increasing frequency: ChatGPT provided medical advice that saved lives.

“Three weeks ago I woke up from a nap and found red spots on my legs,” begins one such account, a video journal by Bethany Crystal, who runs a consulting business and lives in New York. After an exchange with ChatGPT, she recounts, it told her: “You need immediate evaluation for possible bleeding risk.”

“What followed was a harrowing three-day experience that became increasingly frightening,” says Crystal, who was eventually diagnosed with a rare autoimmune disease called immune thrombocytopenic purpura, which can lead to low platelets and increased bleeding. She says she might not have gotten to the emergency room in time if ChatGPT hadn’t insisted.

Hundreds of millions of people now turn to ChatGPT every week for wellness advice, according to its creator, OpenAI. At the beginning of January, the company announced the launch of a new platform, ChatGPT Health, which it says offers enhanced security for sharing medical records and data. It joins other AI tools, such as My Doctor Friend, that promise to work with patients to navigate health care.

Doctors and patients say AI is already having a profound impact on both the way patients receive information about their health and the ability of practitioners to diagnose and communicate with their patients.

Unlimited time to engage

There is a saying in medicine: “If you hear hoofbeats, think of horses, not zebras.” In other words, the most obvious explanation is usually the right one. This is often the default approach to diagnosis for time-pressured physicians.

“I’ve heard a number of patients say, ‘Well, guess what? I’m a zebra,’” says Dave deBronkart, a cancer survivor who has written about how patients are using AI to help with their medical care.

Unlike doctors, ChatGPT has almost unlimited time to question a patient exhaustively. deBronkart says he often hears stories about AI identifying symptoms that distinguish unusual or rare conditions from more common illnesses.

Additionally, he points out, AI’s catalog of diagnoses goes beyond generalized medical knowledge. “It turns out my doctors are really good with horses,” deBronkart says. “They just don’t know all the special things.”

A new type of patient

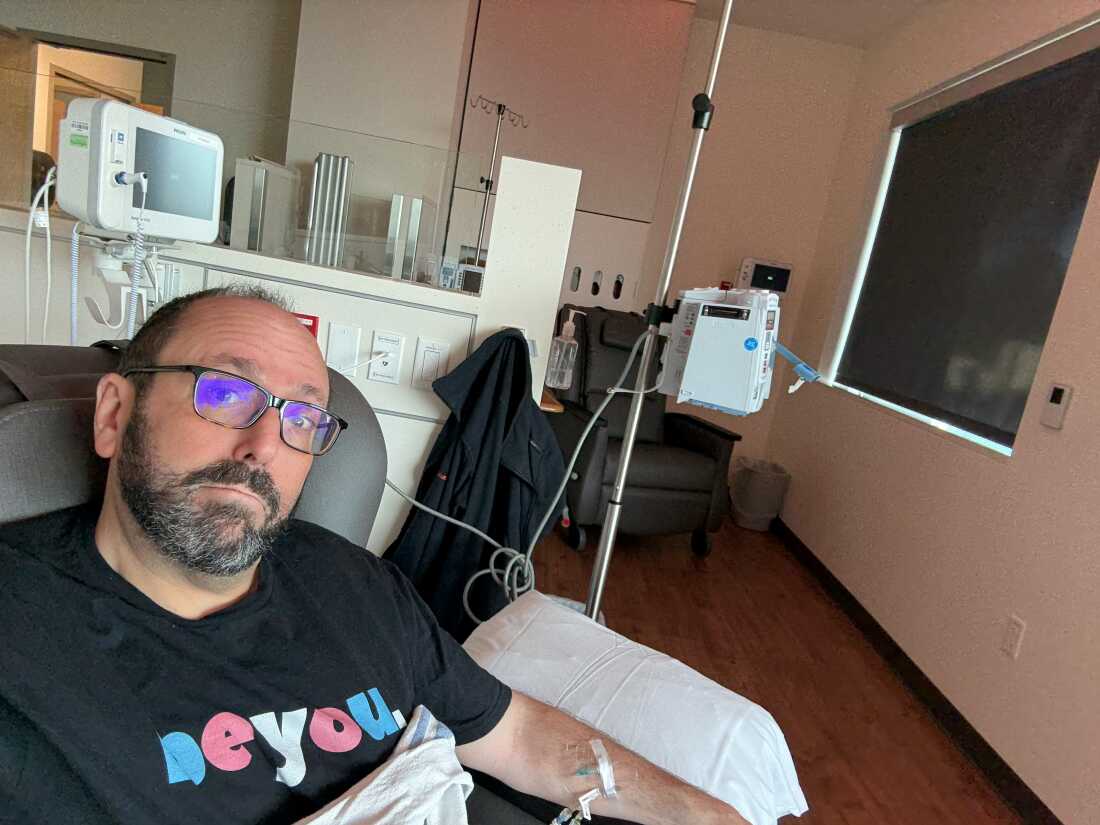

Burt Rosen is using AI to help manage the symptoms and treatment of the two different types of cancer he has been diagnosed with.

Burt Rosen


Many patients report using different AI platforms to support their daily well-being and manage chronic disease, as a complement to care from health professionals.

Sixty-year-old Burt Rosen, who works in marketing for a university in Oregon, uses AI to manage the symptoms and treatment of the two types of cancer he has been diagnosed with: clear cell renal cell carcinoma and a pancreatic neuroendocrine tumor.

“I’m like, ‘I went to the cancer store and it was buy one, get one free,’” he jokes.

Recently, Rosen says, he told the AI that he was suffering migraines and nausea after sleeping. The AI asked him what position he slept in and suggested he use two pillows instead of one. Pressure can increase while lying down, he explains, and cause migraines.

His headaches are gone.

Rosen also uses AI to track his symptoms over time, to find correlations with diet or other triggers, and to understand the range of treatment options. He frequently shows it his test results and asks it to translate them into plain English.

A favorite trick, Rosen says, is to have the AI write in Jerry Seinfeld’s voice, something that’s fun but also makes information about his illness more memorable. “I mean, just one cancer is bad enough!” reads a recent Seinfeld-style translation. “But secondly, what’s the problem?”

Rosen says AI has changed the relationship he has with his oncologist.

“When I go to a doctor’s appointment, I’m no longer going to ask him to explain my tests or my condition,” he says. “My doctor’s appointment is much more of an action planning session.”

Risks of trusting AI

The list of unanswered questions and potential dangers of using AI in medicine is long.

As a consumer product, ChatGPT Health is not regulated by health privacy laws the way a medical provider’s systems are in a clinical setting.

When it comes to mental health, OpenAI is currently named in several active lawsuits alleging psychological harm, including allegations related to suicide.

Patients and doctors emphasize that AI does not replace the doctor and that it is dangerous to treat it as if it does. Doctors say that without clinical oversight, misdiagnosis, misleading advice and human misunderstanding are significant risks.

Dr. Robert Wachter, chair of the Department of Medicine at the University of California, San Francisco, and author of the forthcoming book A Giant Leap: How AI Is Transforming Healthcare and What That Means for Our Future, says he has seen the risks firsthand. Wachter recounts a recent case of an AI advising a patient to try the antiparasitic drug ivermectin as a treatment for testicular cancer.

“It probably wouldn’t hurt you, but what would hurt you is not getting appropriate treatment for your treatable cancer,” he says. “So the capacity for harm here is quite high.”

In one documented case, a 60-year-old man consumed sodium bromide and suffered paranoia and hallucinations after consulting ChatGPT about reducing his salt intake.

Despite these dangers, Wachter is optimistic about the contributions AI can make to healthcare and believes the benefits will eventually outweigh the dangers, if they haven’t already. “I actually think it will be a very good thing,” he says.

Studies show that large language models are competitive with humans in simulated tests of diagnostic reasoning. A study published in the New England Journal of Medicine found that AI systems could frequently reach the diagnosis in difficult cases; a follow-up comparison with a leading human diagnostician showed a slight human advantage. Still, Wachter says, “the AI’s performance was remarkable.”

Wachter says AI has already significantly improved his own work and that of his colleagues. He now uses an AI scribe, a tool that allows him to look his patients in the eye while they speak. “Two years ago, I would have been sitting there pecking away at my computer.”

Within a matter of months, he says, he has also seen widespread adoption among his colleagues of a tool called OpenEvidence, “a sort of ChatGPT for doctors,” which puts comprehensive knowledge at their fingertips.

“I use it all the time,” he says. “We all do it.”

The future of healthcare

Patients and doctors using AI in healthcare say the speed at which it is being integrated into the system is astounding. “AI is already an integral part of my care team,” Rosen says.

At 60, Rosen admits he is unusually tech-savvy for his age. The next generation of patients and doctors, he observes, will not have the same learning curve. “In two generations,” he says, “no one will think twice about it.”

Medicine and health care in the United States are unique, Wachter says, in that the system is so deeply flawed and so badly in need of help.

“If you ask me, what do you think about AI in general, I’m worried,” he says. “I worry about the impact this will have on our politics, deepfakes, jobs. All these things are real. It’s just that in the corner of the world where I work, I see a system that is collapsing and can’t meet people’s needs without this kind of help.”